Star Trek, in its various iterations, has frequently grappled with the question of moral status, and does so perhaps most directly in “The Measure of a Man.” Broadly speaking, this is the question of who counts, morally. That is, what beings deserve moral consideration? Most everyone will agree that inanimate objects do not have such a standing. I could harm or destroy this desk, but it wouldn’t make sense to say I’ve wronged it. Conversely, most everyone will agree that human beings do have a moral status. The question is why? Is it our biological humanity? Star Trek dispenses with that possibility out of the gate. It must be something about us, but what? Life? What is life? No, what seems to make the most sense is to appeal to something like sentience, self-consciousness, or the capacity for self-determination (autonomy).

To my mind, the question becomes the most interesting in Star Trek when it relates to the status of artificially intelligent beings such as Data, or the Doctor in Voyager. Do they count? They aren’t human, or even organic, but are they persons?

With many things indicating that this question will likely be at the center of Star Trek: Picard, it seems like a good time to revisit an episode of The Next Generation that takes it on directly: “The Measure of a Man.”

The central question of “The Measure of a Man” is whether Data has rights. Of course, given that as an audience we have by this point spent more than a season with him as a member of the crew, we are predisposed in the direction of thinking that he does, and that he is a person, in the same way that the rest of the crew of the Enterprise are.

So when Cmdr. Bruce Maddox arrives and keeps referring to Data as “it”, we chafe. He’s not a thing; he’s a person. But is he, though? I want to suggest that “The Measure of a Man” is at its most interesting if we can manage to take up Maddox’s perspective.

There is an android serving with a Starfleet crew. By all accounts, it is doing a great job. This is a complex machine, created not by the Federation or even another civilization, but by a rogue scientist. Surely it is worthy of study. And the possibility of gaining the knowledge necessary in order to create more androids like this would be a boon to Starfleet. Why send human beings into dangerous situations, for example, when one could send a machine?

It is important to note the extent to which Data agrees with this reasoning. He is not opposed in principle to the idea of Maddox making copies of him; he is worried that the process would involve him losing his programming, because Maddox doesn’t really know what he’s doing.

His fear, in other words, is that he will lose himself insofar as his identity consists of a complex set of responses to the things he has experienced. This is what makes him Data. And if other androids were created, they would be different from him for precisely this reason. The possibility of such androids does not make him worry about his own identity; what worries him is the possibility that he will be reverted to something like a blank slate, which would no longer be him.

If that makes sense to us, then we should think about the same question when it comes to human beings. What makes me who I am? Once again, brute biology seems to be the wrong answer. Sure, there are genetic factors that predispose us in certain ways. There is a level at which I am my DNA. But even if I had a twin with the same DNA, we would be different persons. Even on the biological level, epigenetic factors would move to differentiate us. But even more so, our experiences would.

And, as Data says, it is not just the facts of those experiences, but their “ineffable quality” that contributes to who we are. Our memories, in fact, can be fairly unreliable. We are prone to confabulation. Perhaps Data’s positronic brain would be better in that regard, but the point is that there is a level of meaning that goes beyond the brute facts of the matter, and Data is apparently as capable of engaging with this as we are.

So when Maddox arrives and says that he will be taking “it” to try to figure out how “it” works in the hopes of replicating “it”, he is not respecting Data as a person. He’s explicit about this. He does not view Data as a being with a moral status. If it were a box on wheels he wouldn’t be getting all of this pushback, after all.

In the background of this is a question which is actually rather difficult. Sure, in the context of the narrative of Star Trek it seems clear that Data is a person. By the time of “The Measure of a Man” we’ve gotten to know him for a season and a half of the show, and of course Picard and the rest of the crew all view him as such as well. But could this be a kind of delusion?

Maddox is right that Data was built by a human being and is a machine. The question is whether such a being might nonetheless be one with rights, and this hangs on whether an AI might fit the bill for counting morally.

What’s the standard? The Turing test doesn’t quite do the job here, although it gets close. Not being able to distinguish the machine from a human being might lead us to treat it as equal in practical terms, but this doesn’t answer the metaphysical question. After all, this is what Maddox thinks for most of “The Measure of a Man”: that Picard and his crew have been duped by the resemblance Data has to a man. At root, on this view, Data is just a complex set of programming in an artificial body. In Maddox’s mind, Data is no more a person than this computer that I am typing on here.

And that makes some sense. Indeed, I would expect a lot of people to think similarly in the real world. Maybe not in relation to Star Trek, since the show is at pains to convince us of Data’s personhood, but this is certainly something I have encountered talking to people about certain episodes of Black Mirror. The very fact that the being in question is artificial can lead us to think that it does not count, morally speaking.

So, again, what’s the standard?

Being human seems like a poor answer, although it is appealing to some who worry about making sure that people who are cognitively impaired, or in comas, count. Being alive seems like a poor answer, although it is appealing to some with environmental worries. Being able to experience pleasure and pain? Maybe, but it seems wrong to rule out androids simply on the basis of not being able to do so. Being aware of one’s own existence? Yes, that seems on the right track. But something having to do with freedom, or autonomy, seems even more decisive.

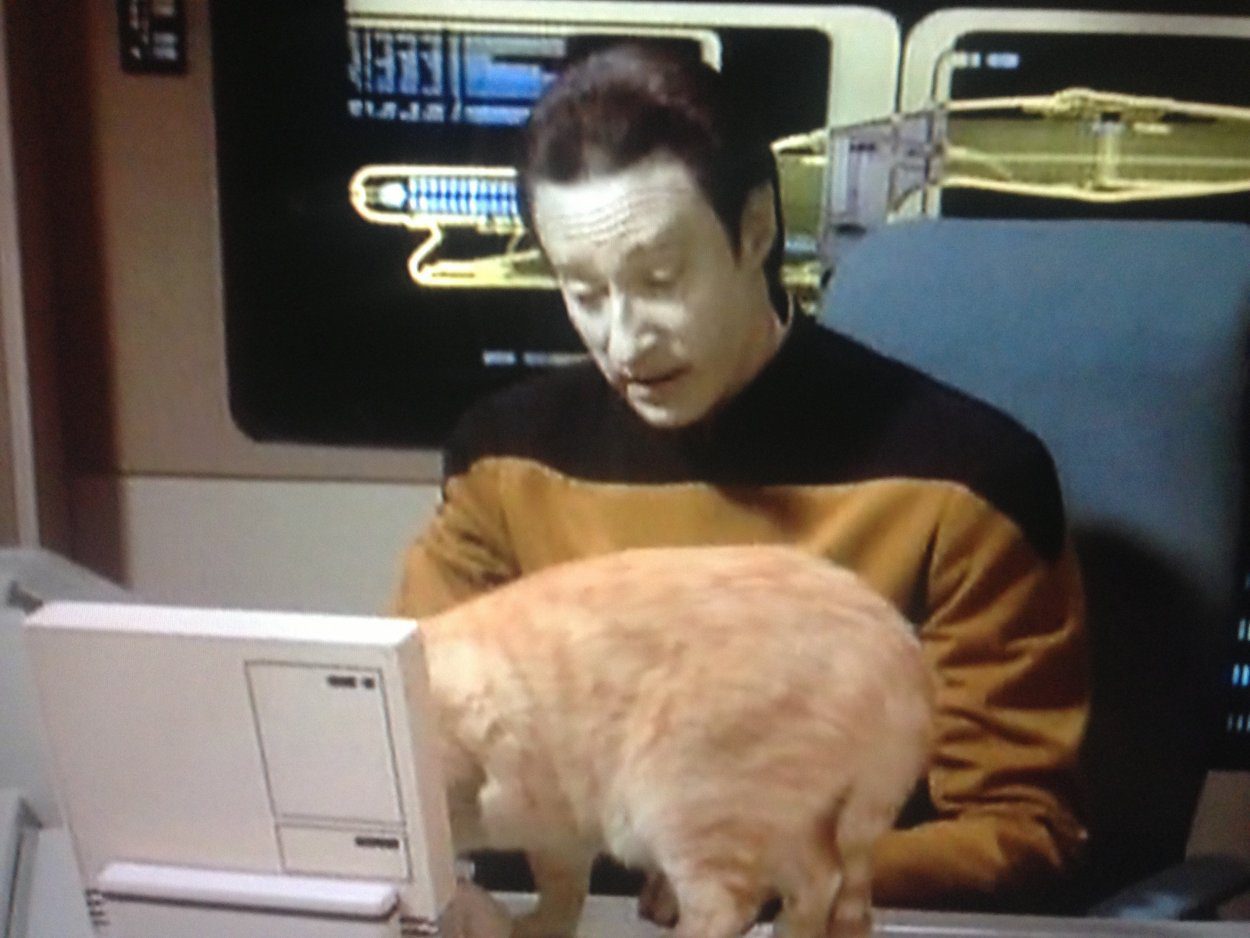

That is, the fact that my cat can feel pleasure and pain seems morally relevant. Is she self-conscious? Is she free? Honestly, I think maybe she is, but it is hard to know. Some might say it is all complex biology. But if the cat is autonomous—if she can truly decide what to do with herself at some level—then surely she counts, morally speaking. And that would mean she has rights, however attenuated or delimited.

It wasn’t this cat, but a cat I have known learned how to open a door. It’s one of those with a horizontal handle. He’d just get up on his hind legs and flip at it. We didn’t train him to do this. It was annoying, frankly, as the door didn’t lock. But he just seemed to figure it out. That seems like autonomy to me.

Of course, the fact that a being has a moral status does not directly mean it is wrong to harm it. After all, most people think it is OK to even kill a human being under the right circumstances: in self-defense, or perhaps in war, and so on. All it means is that the being in question matters in moral terms, unlike my desk here, which I’m pretty sure doesn’t.

So does Data?

In “The Measure of a Man” they have a trial because, after assessing Maddox’s plan, Data chooses to resign from Starfleet rather than submit. Does he have the right to do so, or is he just a thing that they own and can do with as they please?

Maddox thinks it’s the latter, and again, as much as Star Trek has conditioned us against this by the time the episode happens, I think it is worth thinking about the plausibility of that view.

Thankfully, so does “The Measure of a Man” insofar as the meat of the episode is the trial itself. Riker agrees to prosecute, even though he really doesn’t want to. And he makes some good points.

He does his best to prove that Data is simply a machine. He was created by a man. Look, I can take his hand off. And then, look, I can turn him off.

Data is a machine, and no one can deny that. Data doesn’t even deny that himself. But is he a person?

Picard wins the day in court by contending that while Data is a machine, so are we—just of a different type—and that while Data was indeed created by a human being, so too are we created by our parents. He then asks Data about the things he has packed: his medals, a book Picard had given him, and a hologram of Tasha. The point is that these things do not seem to serve a logical purpose.

This is interesting because the official line through Star Trek: TNG is that Data does not have the capacity for emotion. But it would seem he does value things and desire them, nonetheless. So a distinction is being made, it would seem, between what we might call affect on the one hand (what Data refers to when he talks about the “ineffable quality” of memory, for example, or what Picard gets at during this moment of the trial), and sentiment on the other. Or something like that.

Regardless, the impact that Picard’s exchange with Data seems to have on Maddox and Louvois is interesting. We can only read their faces as Data first resists answering Picard’s question about Tasha and then confesses that they were “intimate,” but something seems to shift in their opinions at this moment.

This gets at another possibility when it comes to the question of moral status that has perhaps not been explored as well as it should be. Might it all hang on the capacity to care? To care about what happens to oneself, but also what happens to others? Perhaps care might strike us as a somewhat nebulous notion, and it might be hard to pin down criteria along these lines, but I find this to be one of the most intriguing suggestions that “The Measure of a Man” makes.

Picard proceeds to question Maddox as to what he takes to be the criteria for moral status. Maddox says sentience, but further breaks that down into self-awareness, intelligence, and consciousness. Picard asks Maddox to prove that he (Picard) is sentient, and Maddox at first balks at the question before finally beginning to engage with it. They establish that Data is intelligent and self-aware, but unfortunately leave the criterion of consciousness undefined. Is there a difference between this and self-awareness?

Regardless, all of this is enough for Picard and Data to win the day as they should. Maddox even finally calls Data “he” towards the end of the episode. But Picard also broadens the stakes in his closing argument, as he mentions the fact that if Maddox’s plan to replicate Data were to be carried out, the question they are considering wouldn’t just be about a single being, but about what might well be considered a race of them.

Indications are that by the time of Star Trek: Picard this will indeed be the case. Did the trial in “The Measure of a Man” establish a legal precedent, and did it do so to a sufficient degree? Or were perhaps decisions ultimately made by Starfleet muckety-mucks that went more in the direction of where Maddox was at the beginning of this episode, with abstract lines of reasoning leading to a conclusion that concrete experience speaks against?

I have always thought Star Trek was at its best when the antagonist was not the Romulans, the Klingons, the Dominion, the Borg, or whatever alien species, but higher-ups in Starfleet itself. These are presumably people of good will, but who are detached from the conditions on the ground that we have experienced along with the crew in question, and these episodes explore the questions that would continue to arise even in the fairly utopian world of Star Trek.

And it seems like these will be front and center in Star Trek: Picard. I can’t wait to see it go (hopefully) where no Star Trek has gone before.